We all learnt a lot from Tech Camp 2021, but even after 49 events over 12 days there’s still so much more to discover about the evolving place of tech in libraries. Tech Camp Plus picks up where Tech Camp left off, continuing fascinating conversations long after we’ve rolled up our mouse pads and stowed away our headsets. We’ve invited speakers back to expand on their original content and, importantly, to answer all those questions that occurred to you after their original presentation.

Sessions are run as live webinars, running for 45 minutes with ample time allowed for live Q&A. Same speaker, same topic, more detail.

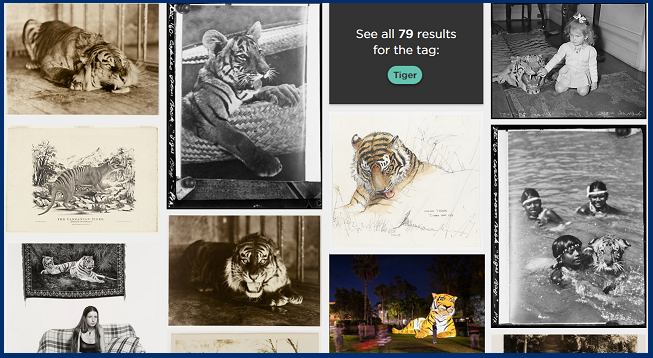

Topic: Tr-AI-ning the TIGER: lessons from SLNSW’s evolving image tagging project

Further reading and Q&A with Jenna:

A few examples of the use of AI/ML in the cultural sector:

- https://inculture.microsoft.com/arts/met-microsoft-mit-ai-open-access-hack/

- https://www.smh.com.au/technology/the-social-robot-that-could-help-save-indigenous-languages-20180601-p4ziyj.html

- http://recognition.tate.org.uk/

- https://experiments.withgoogle.com/collection/ai

Some AI/ML services you can experiment with:

- https://cloud.google.com/vision/

- https://brandfolder.com/workbench/ai-auto-tagging

- https://imagga.com/auto-tagging-demo

- https://azure.microsoft.com/en-us/services/cognitive-services/speech-to-text

Do you have any information on AI in heritage spaces to share?

Justin Kelly is doing some interesting work re AI for heritage collections – https://justin.kelly.org.au/using-ai-to-automatically-transcribe-1890s-handwritten-jenkins-diaries-from-the-state-library-of-victoria/

Would you please share how you set up the tag service?

The Library has paid subscriptions to the three tagging services that we use. The workflow for uploading our collections to be tagged is complex because it is integrated with our ingestion of files into the Library catalogue. Essentially, you will need a developer to set up a portal for you where you can upload your files to the various tagging services, and then you’ll need a way to access the data output once your images have been tagged. All the services we use have excellent developer documentation available on their website that will help understand the possibilities. I’ve also linked a few services above where you can upload a few sample files to test results with your own collections.

Did you do any experimentation with adding up percentages of tags that share a common stem, for example ‘photograph album’ and ‘photographic paper’, which might give both terms a combined higher weighting?

No, in the first phase of developing the TIGER algorithm we didn’t experiment with any Natural Language Processing (NLP) techniques for keywords that had confidence ratings much lower than our accepted thresholds. We are definitely using these type of techniques though for the second phase of the project creating hierarchical taxonomies of linked terms.

Can you / do you contribute data back to the three services you use to improve the tagging?

No, not at this stage, though we would love to! We don’t have any ability to control the training conducted for the algorithms of the services that we pay to use. But when we eventually start doing more work in training our own custom algorithms, we’ll have much more control over data inputs and outputs.

_____________________________________________________________________________________

The State Library of NSW holds one of Australia’s largest cultural collections and has over 5 million open access digital files available for research and reuse. The Library recently released its new Digital Collections interface that for the first time allows users to browse digital photographs, artworks, audio files, objects, books, manuscripts and much more in a simple and visually engaging way.

Providing access to a collection of this size with almost 200 years of often inconsistent or incomplete metadata and the outputs of a 10-year digitisation program is a unique challenge and in the past it has been difficult to provide meaningful experiences for users wanting to explore the depths of the collection. The new Digital Collections interface attempts to address these challenges using a variety of techniques, including the use of artificial intelligence and machine learning algorithms for description, tagging and transcription, crowdsourcing and digital volunteering efforts and custom-built digital viewers.

In this presentation we’ll share how the Library’s TIGER image tagging project was developed, the ongoing testing and experimentation guided by user needs that has helped refine machine-generated data and see a preview of the next phase of the image tagging project that will make the Library’s digital collections even more discoverable. There will also be discussion about the importance of recognising – and where possible counteracting – inherent biases of machine learning technologies.

This talk is an extended version of Jenna’s VALA TechEX talk from April 2021 and takes a more detailed look at the State Library’s Digital Collections, TIGER project and other applications of machine learning.

Speaker: Jenna Bain, State Library of New South Wales

Jenna is the State Library of NSW’s Digital Projects Leader who works on a wide range of projects that aim to improve access to and engagement with the Library’s collections. Jenna hopes to make a positive contribution to the Australian cultural heritage landscape by exploring opportunities for meaningful connections between community and GLAM institutions through technology.

Who should attend: GLAM sector staff – librarians, digital practitioners, data scientists and tinkerers.

This Tech Camp Plus event is free to attend. Please be advised this presentation will be recorded.

____________________________________________________________________________________________________________

VENUE

Online Zoom Webinar

FREE to attend for members and non-members.

Registration is essential. Registration will be confirmed via email.

CANCELLATIONS

Those seeking to cancel a registration are encouraged to contact the VALA Secretariat asap – registration numbers are limited for this event, and we appreciate prior advice if you are unable to attend. Please contact the VALA Secretariat (E: vala@vala.org.au or Tel: 03 9844 2933).

DATE & TIME

Wednesday 27 October 2021, 12.00 midday – 12.45pm AEDT.

Double trouble: VALA + AI4LAM

Join us and our friends at AI4LAM for presentations on AI in the GLAMR sector over two consecutive days.

Computer vision and automation for CSIRO National Research Collections

Learn about CSIRO’s use of computational approaches to expand and extend their scientific work and digital curation practices working with Australia’s National Research Collections.

Tuesday, October 26, 2021

1:00 – 2:00pm AEDT

Register for the AI4LAM presentation here.